The Rise of Generative Engine Optimization

The Shift to Answer Engines

Think about how you search for answers today. When you have a question, do you still scroll through a page of links — or do you type it into ChatGPT, Perplexity, or Gemini and get a straight answer in seconds?

You’re not alone. AI-referred sessions jumped from 17,000 to over 107,000 between January and May 2025 [1], and that traffic behaves nothing like organic search. People ask, get a direct answer, and move on. No results page. No blue links. No clicking through ten tabs.

Google itself is accelerating this. Its AI Mode now generates a full answer instead of a list of links, and 93% of queries in that mode result in zero clicks to any external website [5]. Gartner projects a 25% drop in traditional search volume by the end of 2026 [6]. Grand View Research valued the AI search engine market at $16.28 billion in 2024, projecting it to reach $50.88 billion by 2033 [7].

But here’s what makes this interesting for publishers, not just alarming: a study of 12.3 million website visits found that ChatGPT traffic converts at 14.2% compared to Google organic’s 2.8% [2]. Fewer visits, but five times the purchase intent. The users who do arrive through AI citations are further along in their decision-making. They’re not browsing. They’re acting.

The question is no longer whether this shift matters. It’s whether your content is built to survive the pipeline that decides what gets cited and what gets skipped.

How AI Answer Engines Actually Work

Each query to ChatGPT, Perplexity, or Gemini triggers a retrieval pipeline. How well your content fits that pipeline directly controls whether you get cited at all.

It starts with crawlers. OpenAI runs three: GPTBot gathers training data, OAI-SearchBot builds the searchable index that powers ChatGPT’s live citations, and ChatGPT-User fetches pages when a user explicitly asks for a URL [8]. Anthropic operates a parallel set: ClaudeBot for training, Claude-SearchBot for search indexing, and Claude-User for direct page requests [9]. None of them render JavaScript. If your content loads client-side, these bots see an empty page.

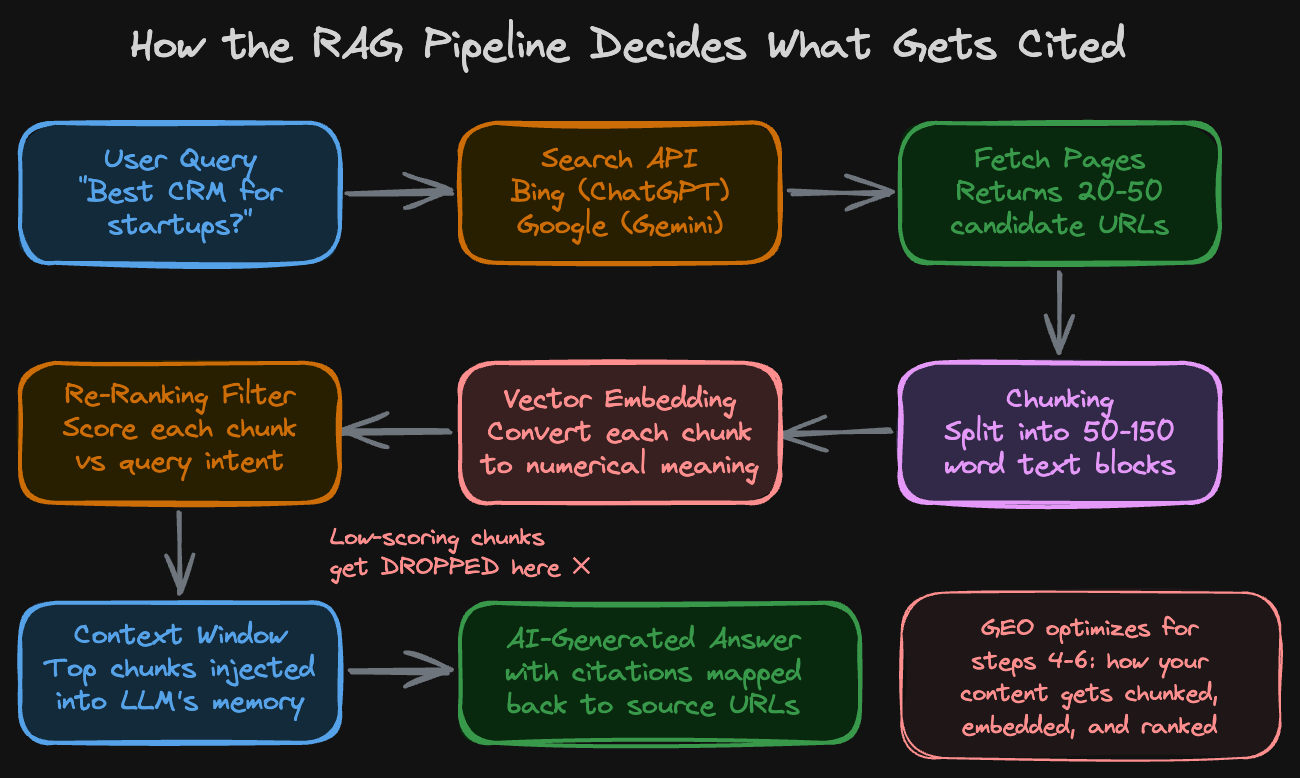

Once a page has been crawled and indexed, the retrieval pipeline kicks in. When a user asks a question, the model’s search layer queries an API - ChatGPT routes through Bing, Gemini through Google. That API returns candidate URLs. The system fetches those pages and runs a chunking pass, splitting each page into text segments of roughly 50-150 words . Each chunk gets converted into a vector embedding - a numerical fingerprint of what that chunk means. Then a re-ranking model steps in: it compares every chunk’s meaning against the original question and assigns a relevance score. Only the top-scoring chunks make it into the model’s context window - the limited text the LLM can actually read before generating its answer. The LLM writes its response from those chunks and maps citations back to the source URLs.

This is Retrieval-Augmented Generation (RAG), and it changes everything about how you should think about content.

A page can hold the #1 position on Google and still get ignored by ChatGPT. In traditional SEO, backlinks and domain reputation push you up the rankings. In the RAG pipeline, none of that matters at the re-ranking stage. What matters is how your content is laid out. A 2,000-word article written as one continuous wall of text with no headings or clear sections gets split into chunks that start mid-sentence and end mid-thought. None of them clearly answer a specific question. The re-ranker scores them low and drops them. The search API found your page, the crawler fetched it, but the LLM never saw your content because no chunk scored high enough.

Researchers from Princeton, Georgia Tech, and the Allen Institute for AI published a peer-reviewed study on this at KDD 2024 [4]. Across multiple benchmarks, GEO techniques - structuring content for generative engine retrieval - boosted visibility in AI-generated responses by up to 40%. The most effective method, adding verifiable statistics and explicit source citations, produced a 115% visibility jump for mid-ranking sites. The sharpest finding: the top-ranked Google result lost 30% of its visibility in generative engine answers. Traditional ranking authority doesn’t transfer. Content specificity and chunk quality do.

What wins citations: self-contained paragraphs that resolve a query without needing surrounding context. Concrete, verifiable data points over broad claims. Structured content with clear H2/H3 headers that give the chunking algorithm natural break points [3].

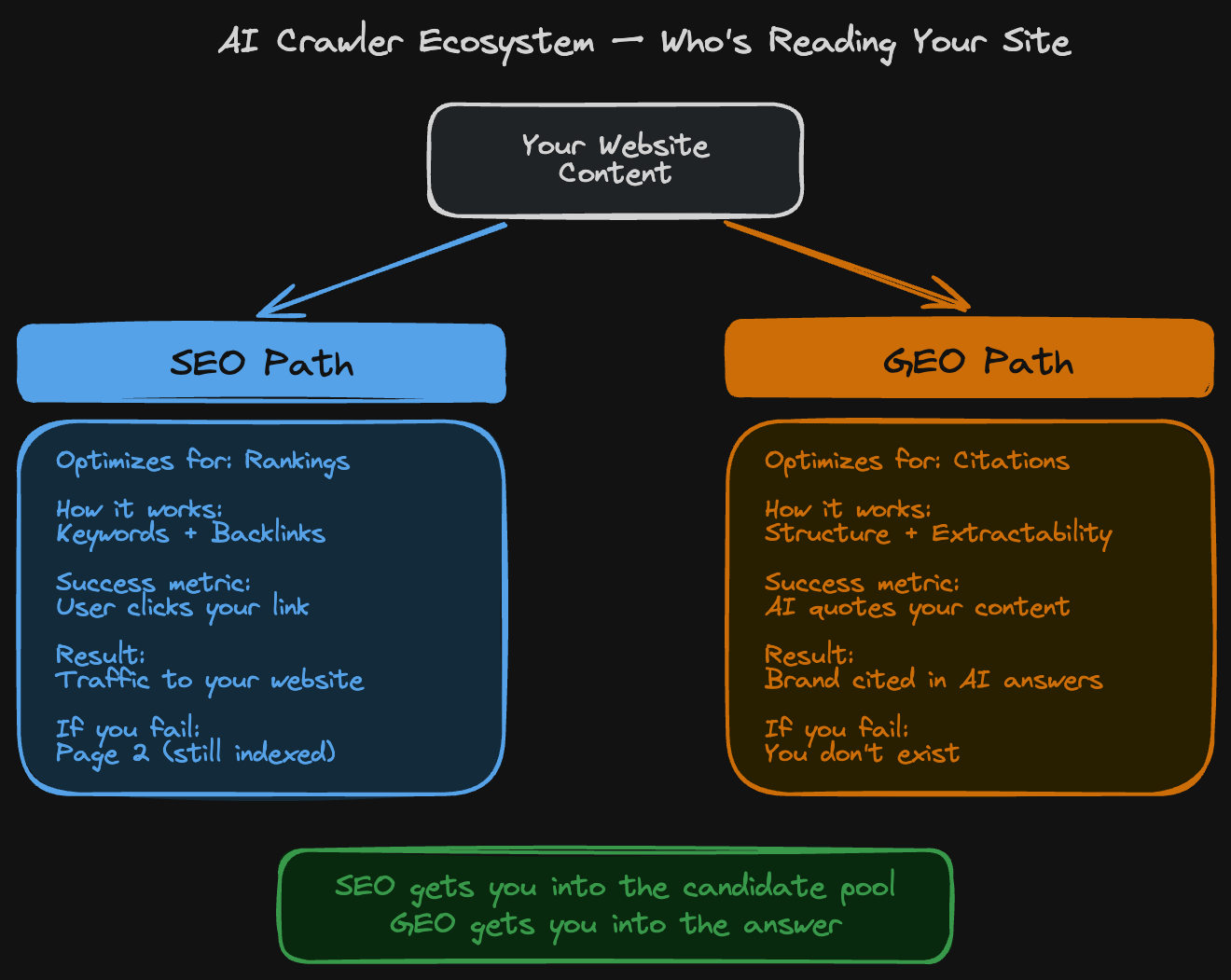

SEO Built the Map. GEO Reads It Differently.

Three contrasts cut to the core of it:

SEO optimizes for clicks. GEO optimizes for citations. In traditional search, you win when someone clicks your blue link on Google. In AI search, you win when ChatGPT or Perplexity quotes your content directly inside its answer. The user reads your words, gets the information they need, and may never visit your website. But your brand name and URL still appear as the attributed source - that’s a citation. It builds trust and recognition even without a click.

SEO runs on keywords and backlinks. GEO runs on how your content is written and organized. In traditional SEO, you repeat target keywords and earn links from other sites to climb the rankings. In GEO, none of that influences the re-ranking filter. What the re-ranker cares about is whether a chunk from your page directly and clearly answers the question being asked. A well-structured paragraph with a specific, verifiable claim beats a keyword-stuffed page every time.

SEO has page two. GEO doesn’t. On Google, ranking on page two means less traffic but you’re still indexed and discoverable. In an AI-generated answer, there’s no second page. The model either mentions you or it doesn’t. If you’re not in the answer, you’re not ranked lower - you simply don’t exist in that conversation.

But here’s what people miss: these aren’t competing strategies. They work as a chain. SEO makes your content discoverable by the search APIs that feed AI tools - without it, ChatGPT’s retrieval pipeline never finds your page in the first place. GEO makes sure that once your page is found and chunked, the re-ranker actually selects it. You need SEO to get into the candidate pool. You need GEO to get into the answer.

Built for Where Attention Is Going

The shift already happened. Users moved from clicking search results to reading AI-generated answers, and the infrastructure followed. Google, OpenAI, and Anthropic are all building systems that crawl, chunk, and cite - and none of them care about your keyword density or backlink profile.

What they care about is whether your content answers a question clearly enough to survive the retrieval pipeline. That means structured pages, verifiable claims, and self-contained paragraphs that a re-ranker can score high on their own.

That’s why we’re building Brank - to help brands structure their content for the way people actually find information now. If you want your content cited where attention is going, not just ranked where it used to be, reach out at

Visit - Brank.ai

Contact - tikesh@brank.ai

Sources

- Previsible — AI SEO Study 2025: AI Traffic Report (analysis of 19 GA4 properties, Jan-May 2025)

- Superprompt — AI Search Traffic Converts 5x Better Than Google (12.3M visits across 347 businesses)

- Ahrefs — How to Earn LLM Citations

- IIT Delhi, Princeton, Georgia Tech, Allen AI — GEO: Generative Engine Optimization (KDD 2024)

- DemandSage — AI Overviews Statistics, 2026

- Exposure Ninja — AI Search Statistics for 2026 (citing Gartner)

- Grand View Research — AI Search Engine Market Report

- OpenAI — Overview of OpenAI Crawlers

- Anthropic — Does Anthropic crawl data from the web?

- How perplexity built An AI google https://blog.bytebytego.com/p/how-perplexity-built-an-ai-google